When I was twenty-nine, I visited a tarot card reader. It’s not really my thing, but a couple of girlfriends thought it would be a laugh, so we went along together. The reader had a name like Psychic Sue or Transcendental Trudy but turned out to be a perfectly ordinary-looking woman in her mid-thirties, with no more mystical trappings than her pack of cards. My reading began with the standard predictions for a female customer in her late twenties: happy marriage, kids and travel. Then came a card that indicated study. And then another, “more study”. And another, “even more study”. At this point, the tarot lady looked up at me in surprise. “Oh yes,” I said, “it’s because I work in IT.”

At that time in the late 1990s, I’d already worked in IT, or Information Technology, for a good decade. My work life isn’t so different now, almost twenty-five years later. I still design and build corporate computer systems in enterprises small and large. I build servers, develop solutions, troubleshoot faults, tear out my hair over impossible demands, stress my way through intractable issues, and then eventually, somehow, get it all working. The tarot lady was right about the study, as there is always a new system or version or technique to learn. Don’t work in IT if you don’t like learning! Happily, the tarot lady was also right about the marriage, kids and travel.

Had she asked me my date of birth, she might have pronounced me destined to work with computers. You see, I was born on a landmark day in the history of human technology. It was the twentieth of July 1969 and, while I took my first breaths, Neil Armstrong and Buzz Aldrin were taking a few of their own as they prepared to walk on the moon.

As is only reasonable, I like to claim at least partial ownership of the moon landing. The invitation to my twenty-first birthday party was the front page of the Sydney Morning Herald with the headline “Moon landing expected today!” In the lead-up to my half-century, I hit up an acquaintance with NASA connections to see if there was any way I could get to the fiftieth-anniversary celebrations. Sadly, NASA didn’t think to invite the moon babies to the party, and I had to make do celebrating with one of the best meals of my life in Tokyo.

The moon landing was a global phenomenon that stopped anyone within range of a television set in their tracks, mouths agape. An estimated sixth of the world’s population watched the landing,[1] and it remains one of the all-time most-watched televised events. At less than a day old, I was oblivious to the fuss, but I have always been alert to its echoes. Every year, around my birthday, newspaper articles remind us, “On this day in 1969…” Every year, not just the big anniversaries that end in zeros and fives. If I ever grow so doddery I forget how old I am, I’ll just have to wait around for July 20 and some news headline will reveal how many years it’s been since Armstrong’s small step. Perhaps these annual reminders inspired in me a nascent belief that technology has the power to elevate human achievement to the realms of the miraculous.

My birth coincided with what was widely touted as the dawn of the Space Age, but you may have noticed that we’re not yet flying to the moon for holidays or moving to Mars. Instead, we’ve been living through the Personal Computer Age. In a matter of decades, personal computers have gone from a hobbyist’s toy to a device found in the pockets of 80% of the world’s population.[2] These pocket computers, otherwise known as smartphones, have a hundred thousand times the processing power that got Neil Armstrong, Buzz Aldrin and Michael Collins to the moon and back.[3] Personal computing has been, by far, the most influential technological revolution of my lifetime.

In 1969, if anyone had asked what the chances were of baby Carol visiting the moon one day, people might have said “pretty good”. The film 2001: A Space Odyssey, released in 1968, includes a scene where tourists fly into space on Pan Am Airlines. Adults in 1969 had been living through their own technological revolution, that of the commercial airline industry. From 1947, if you could afford it, you could fly from Sydney to London via the Qantas Kangaroo Route, which took you three days and six stops to hop your way across the planet. When I was born, Qantas was only two years off launching their one-stop flight from Sydney to London. For the inhabitants of a country with a large population of Europeans who had emigrated by ship, a two-hop, and increasingly affordable, flight to the old world must have seemed extraordinary. It can’t have been much of a stretch to envisage commercial flights to the moon by the new millennium.

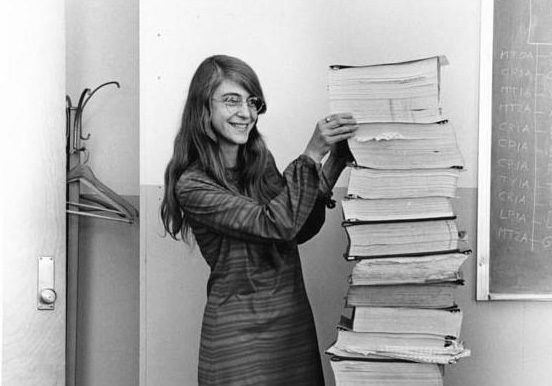

If the question instead concerned baby Carol’s chances of working in computing one day, the odds would have looked at least as good as a lunar long weekend. Thousands of women contributed to the Apollo 11 mission, among them scientists, technicians and computer programmers. The head of NASA’s software development group, and designer of Apollo 11’s guidance software, was a woman named Margaret Hamilton.[4] Hamilton’s software is credited with “saving the moon landing”,[5] as it worked around a last-minute hardware error while guiding the Eagle through its successful descent. Hamilton’s innovations in error handling and her championing of software engineering as a professional discipline have had an undeniably lasting impact on the industry.

These days, many people are surprised to learn that a woman designed the code for the Apollo mission. I regret to say that, until I started my reading for this book, I was surprised too. I have now also learnt that, in the earliest days of computing, coding the instructions into computers was considered “women’s work”, akin to operating a typewriter or telephone exchange. Men did the manly design and build of the machines, then left the tedious job of making them do something to women. It was women, with no manuals and scarce instruction, who invented programming.

The very earliest computers were built in the 1940s as part of the US involvement in World War II. One of the first was the Electronic Numerical Integrator and Computer (ENIAC), commissioned by the US Army to calculate artillery trajectories. It was built at the University of Pennsylvania and painstakingly reconfigured for each calculation by a team of women known as the ENIAC Six.[6] Around the same time, the one-woman powerhouse that was Grace Hopper was working on a Navy-sponsored project, developing a punch-tape technique to program the Harvard Mark I computer.[7]

From such beginnings evolved the mainframe computers developed by commercial manufacturers such as IBM. Mainframes were designed for large-scale repetitive transactions like processing sales, generating invoices, or tracking inventory. The mainframe computer, individually built on commission in the 1950s, really took off in the 1960s as big enterprises invested in the technology, hoping it would give them a competitive edge. As an edge, it did not come cheap. In 1965, an IBM mainframe cost upwards of $2.2 million to buy,[8] and needed a custom-built, climate-controlled room to house it. Even after that enormous outlay, the mainframe was useless without the people who knew how to program it. The original mainframes came with no code and had to be laboriously programmed, from scratch, in ways unique to the vendor and even to the model.

As demand grew for programmers, so too did the pay and status of the job. Working as a programmer became an increasingly attractive prospect for men, though it was by no means unusual to find female programmers in the computer rooms of the 1960s. In 1967, Cosmopolitan magazine published an article titled “The Computer Girls”, which encouraged young readers to consider a career in programming:

Twenty years ago, a girl could be a secretary, a school teacher… maybe a librarian, a social worker or a nurse… Now have come the big, dazzling computers – and a whole new kind of work for women: programming… Anything from predicting the weather to sending out billing notices from the local department store. And if it doesn’t sound like woman’s work – well, it just is.[9]

The article quotes Grace Hopper herself, who compared programming to the organisation, creativity and eye for detail that go into hosting a dinner party. But despite Hopper’s and Cosmopolitan’s encouraging tones, programming was already well on its way to being rebranded as “men’s work”.

In 1969, IBM ran a series of ads in US newspapers offering paid programmer traineeships. The ads list the characteristics of a suitable candidate, which include enjoying logic puzzles, bridge, and musical composition, as well as having a “lively imagination”. Nothing in the list is gender-specific, and a woman may well have considered applying had she got past the large and eye-catching headline:

Are YOU the man to command electronic giants?[10]

Even so, the proportion of women working in computing continued to climb steadily, reaching 31% in 1990, just around the time I was entering the workforce.[11] And then the percentage dropped and continued to fall. Today the percentage of women working in computing remains stubbornly around 20% in most western OECD countries, including Australia, the UK and the USA. For much of my career, it became less likely every year that I would encounter other women doing the type of hands-on technical work that I do.

Sometimes, people asked me why there were so few women in IT, like I should know. They were only asking so they could tell me their own theory anyway. “Women think computers are boring” or “They’re put off because they think we’re all nerds” or “It’s because girls don’t like to tinker and play with computers the way boys do.” None of these ideas ever felt right, particularly because they left no room for me. I’ll happily admit to being a nerd, but I don’t “tinker” just for the sake of it, and I only find tech interesting when I have a good use for it. I’d shrug at whatever over-simplified generalisation I’d just been treated to, and get back to work.

Now I want better answers. Other professional occupations have continued to track towards gender parity, so what’s the deal with computing? What has made a career in IT an unattractive option for so many young women? What is it about this industry that makes the women who do join the sector more likely to quit? And, the most niggling question of all, is it actually that I’m the strange one here?

Time to find out.

[1] https://www.wired.com/story/world-watched-apollo-11-moon-photos.

[2] https://www.statista.com/statistics/330695/number-of-smartphone-users-worldwide, August 2022.

[3] https://theconversation.com/would-your-mobile-phone-be-powerful-enough-to-get-you-to-the-moon-115933.

[4] https://www.smithsonianmag.com/smithsonian-institution/margaret-hamilton-led-nasa-software-team-landed-astronauts-moon-180971575.

[5] https://interestingengineering.com/innovation/margaret-hamilton-software-engineer-who-saved-the-moon-landing.

[6] https://www.codecademy.com/resources/blog/eniac-six-women-programmed-computer/.

[7] https://news.yale.edu/2017/02/10/grace-murray-hopper-1906-1992-legacy-innovation-and-service.

[8] https://www.ibm.com/ibm/history/exhibits/mainframe/mainframe_PP2075.html.

[9] Lois Mandel, “The Computer Girls”, Cosmopolitan, April 1967.

[10] IBM ad, New York Times, May 1969. Taken from Ensmenger, Aspray and Misa, The Computer Boys Take Over: Computers, Programmers, and the Politics of Technical Expertise, 2010.

[11] Julia Beckhusen, Occupations in Information Technology, 2016, figure 7.
